# How AI Is Changing the Way We View Safeguarding

*Published: March 2026 · 4 min read*

---

## The sector is sitting on data it can't use

Children's residential care in England generates an enormous volume of safeguarding-relevant information every day. Incident logs, handover notes, Regulation 44 reports, workforce changes, notifications — all accumulating inside individual homes, in disconnected spreadsheets, Word documents, and email chains.

The information exists. The intelligence doesn't.

Between April 2024 and March 2025, Ofsted recorded 510 child protection concerns across 410 children's homes. It issued 75 restriction-of-accommodation notices, 36 suspensions, and 12 cancellation notices across 4,010 registered homes. Of those registered homes, 19% were operating without a registered manager.

These aren't numbers produced by a lack of effort. They're the output of a system that depends on manual oversight operating at a scale manual processes were never designed to handle.

---

## What AI actually does for children's homes

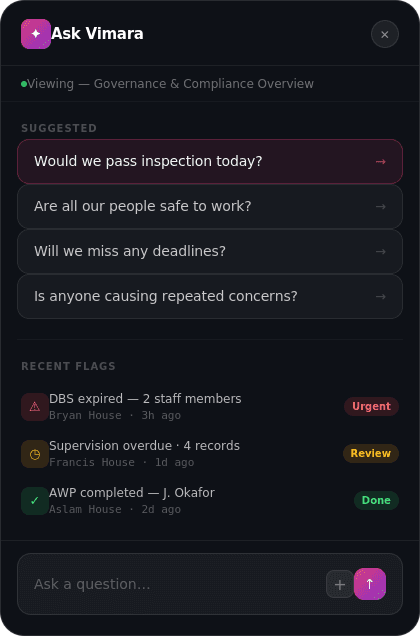

The public conversation about AI and children focuses on risk — deepfakes, AI-generated abuse material, chatbot dependency. Those are real concerns. But there's a second conversation: AI as the *infrastructure* that helps adults responsible for children do their jobs better.

Three capabilities matter most.

**Pattern recognition across fragmented records.** A registered manager overseeing multiple homes has hundreds of incident entries, rotas, and concern logs accumulating monthly. The human brain can't hold that volume and detect slow-moving patterns — a gradual rise in restraints at one home, a staff member accumulating low-level concerns across sites, a young person whose missing episodes are accelerating. AI surfaces what's already in the data but invisible to the person who needs it.

**Real-time compliance monitoring.** The Children's Homes (England) Regulations 2015 create a dense web of operational requirements. Most homes track compliance using calendars and institutional memory. When a registered manager leaves (and 19% of homes don't have one), the compliance picture degrades fast. AI maintains a persistent, auditable view of what's due, what's overdue, and what's been evidenced.

**Faster escalation and decision support.** Working Together 2023 sets out referral pathways and threshold decisions. AI supports those decisions by surfacing relevant history, prompting the right questions, and logging the rationale — without making the decision itself.
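To make the first of these capabilities concrete, here is a minimal sketch of how a "gradual rise in restraints at one home" might be flagged from raw incident entries. It is an illustration, not a description of any particular product: the record fields (`home`, `kind`, `date`), the sample data, and the 0.5-incidents-per-month threshold are all assumptions, and a simple least-squares trend line stands in for whatever model a real system would use.

```python
from collections import Counter
from datetime import date


def monthly_counts(incidents, home, kind, months):
    """Count incidents of one kind at one home for each (year, month) in months."""
    counts = Counter(
        (inc["date"].year, inc["date"].month)
        for inc in incidents
        if inc["home"] == home and inc["kind"] == kind
    )
    # Counter returns 0 for months with no matching incidents.
    return [counts[m] for m in months]


def slope(values):
    """Least-squares slope of values against their position (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


# Hypothetical sample data: Home A logs 1, 2, 3, then 4 restraints per month.
months = [(2025, 1), (2025, 2), (2025, 3), (2025, 4)]
incidents = [
    {"home": "Home A", "kind": "restraint", "date": date(2025, month, day)}
    for month, n in [(1, 1), (2, 2), (3, 3), (4, 4)]
    for day in range(1, n + 1)
]
incidents.append({"home": "Home B", "kind": "restraint", "date": date(2025, 2, 10)})

THRESHOLD = 0.5  # assumed: flag a rise of half an incident per month or more

for home in ("Home A", "Home B"):
    series = monthly_counts(incidents, home, "restraint", months)
    print(home, series, "rising" if slope(series) >= THRESHOLD else "stable")
```

The output of a check like this is a prompt for a manager to look more closely, not a conclusion. That keeps the decision with the responsible adult.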

---

## The financial case boards can't ignore

The NAO reported in 2025 that local authorities spent an average of £6,100 per child per week on children's home placements. A 12-week urgent Ofsted suspension — the standard initial period — puts approximately £395,000 at risk for a six-bed home at 90% occupancy. For a 12-bed home: £790,000. For a 20-bed home: £1.3 million.

That's before legal costs, remediation, staff replacement, and commissioning trust damage. HSE prosecution records show six-figure fines. The Lambeth Council redress scheme alone has paid over £100 million.

AI-driven safeguarding infrastructure doesn't eliminate the risk. It reduces the probability and severity of the events that generate it. That's an ROI case, not a cost case.
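The suspension figures above can be reproduced with a few lines of arithmetic. The sketch below assumes, as the figures do, that every occupied bed is paid at the NAO average weekly rate and that all of that income is at risk for the full 12 weeks. The function and constant names are illustrative only.

```python
WEEKLY_COST_PER_CHILD = 6_100  # GBP per child per week (NAO 2025 average)
SUSPENSION_WEEKS = 12          # initial suspension period used in the article
OCCUPANCY_PERCENT = 90         # occupancy used in the article


def income_at_risk(beds, weeks=SUSPENSION_WEEKS,
                   occupancy_percent=OCCUPANCY_PERCENT,
                   weekly_cost=WEEKLY_COST_PER_CHILD):
    """Placement income exposed during a suspension, in GBP."""
    # Multiply the integers first so the only float operation is the final division.
    return beds * occupancy_percent * weekly_cost * weeks / 100


for beds in (6, 12, 20):
    print(f"{beds}-bed home: GBP {income_at_risk(beds):,.0f}")
# 6-bed home: GBP 395,280
# 12-bed home: GBP 790,560
# 20-bed home: GBP 1,317,600
```

Rounded, these match the £395,000, £790,000, and £1.3 million quoted above. Changing the occupancy or weekly rate shows how sensitive the exposure is to each assumption.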

---

## Why the sector is behind — and why that's changing

Every other high-stakes regulated industry has built intelligence infrastructure to surface risk. Banking has KYC. Aviation has mandatory incident reporting with sector-wide learning loops. Food safety has HACCP. In children's care, incident reporting stays inside individual homes, learning is local, and there's no connected system tracking workforce safeguarding history across providers.

The gap isn't effort. It's infrastructure.

What's changed is the economics. Five years ago, building sector-specific AI for a market of 826 private operators would have been prohibitively expensive. Foundation models and modern data infrastructure have closed that gap. The 434 single-home operators and 309 running two to five homes — too many sites for a clipboard, too lean for enterprise compliance platforms — are exactly where this technology fits.

---

## The shift that matters

For decades, safeguarding has been treated as a training-and-culture problem. More training, updated policies, refreshed procedures. Those things matter. But they're not sufficient when underlying systems can't surface risk at the speed and scale the sector demands.

AI makes it possible to treat safeguarding as what it actually is: a systems-and-data problem that requires intelligence infrastructure.

Ofsted's renewed framework, grading safeguarding as "met" or "not met" from November 2025, reinforces this. Inspectors are assessing lived practice and culture — whether the safeguarding system actually works day-to-day, not whether the paperwork looks right during a visit. AI doesn't fabricate that evidence. It generates it as a natural byproduct of the work already being done.

510 concerns. 36 suspensions. A market growing at 15% annually, with most new homes opened by the smallest operators. The sector is scaling. The oversight infrastructure hasn't scaled with it.

That's what AI changes.

---

*Sources: Ofsted regulatory activity data (2024–25), Ofsted annual report (2024/25), NAO Managing Children's Residential Care (2025), CMA Children's Social Care Market Study, Children's Homes (England) Regulations 2015, Working Together to Safeguard Children 2023.*
